How human-aware AI could save us from the robopocalypse

IDG NEWS SERVICE: AI should relate to people as an apprentice, not a tool, one researcher says

Much virtual ink gets spilled each week enumerating the many horrors that could be ours in an AI-filled world, but top researchers in the field are already thinking ahead and making plans to ensure none of that happens.

Human-aware

In particular, the importance of making artificial intelligence "human-aware" has come to be viewed as a top imperative for the field, earning it special status as an official theme of the International Joint Conference on Artificial Intelligence taking place this week in New York.

"It's crucial that we design smart systems to work well with people," said Harvard professor Barbara Grosz during a panel discussion at the conference on Tuesday. "It's an imperative, not an option."

AI should be a complement to human intelligence, not a replacement, Grosz said. As such, the ability to understand who it's interacting with and respond accordingly -- such as by explaining the decisions it makes -- is essential.

"Human intelligence is rooted deeply in social interaction," Grosz said. "Without progress on human-aware AI, in many situations you won't have the 'I' in AI."

Such sentiments were echoed by the other experts participating in the panel.

Working together

While humans' strengths include compassion, value judgment and an understanding of social benefit, AI excels at bias elimination, anomaly detection and population analysis, said Guruduth Banavar, vice president of cognitive computing at IBM Research.

Humans and machines working together have the potential to "scale the notion of expertise across many fields," he added.

To make that happen, key capabilities needed in human-aware AI include both autonomy and the ability to operate in group environments with a shared focus, said Kenneth Forbus, a professor at Northwestern University.

Other important characteristics are "language sufficient to express beliefs and hypotheticals" along with the ability to build models of the intent of others and a strong interest in helping and teaching, he said. In general, human-aware AI should relate to people as an apprentice, not a tool, and learn and adapt over time, Forbus added.

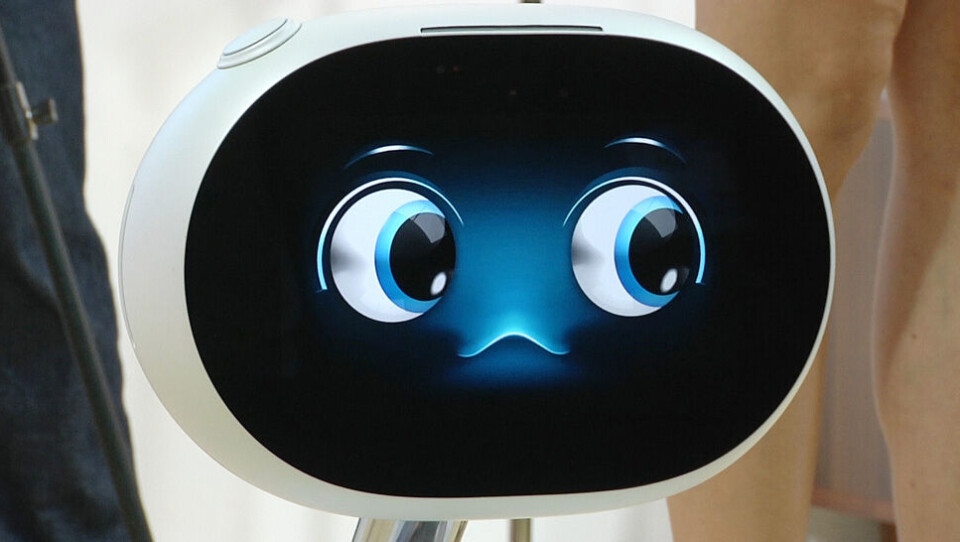

Carnegie Mellon professor Manuela Veloso highlighted the implications of human-aware AI for mobile robots, which are her research focus.

Such robots may be designed to deliver packages in an office, say, or to escort humans from one office to another. Sometimes they need human help, for instance to understand an order or even to press an elevator button. For them, human-centered planning could mean choosing a route that's best not just in terms of minimizing distance but also in terms of the availability of potential human helpers, she said.
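To make that trade-off concrete, here is a minimal sketch of a route planner that weighs distance against the chance of finding a human helper along the way. It is an illustration only, not Veloso's method: the `plan_route` function, the `helper_weight` parameter, the 0-to-1 helper-availability scores and the toy office map are all assumptions invented for this example.

```python
import heapq


def plan_route(graph, start, goal, helper_weight=0.0):
    """Dijkstra search over edges given as (neighbor, distance, helper_availability).

    helper_availability is a hypothetical score in [0, 1]. Each edge costs
    distance + helper_weight * (1 - helper_availability), so a larger
    helper_weight steers the robot toward corridors where people are around.
    """
    frontier = [(0.0, start, [start])]
    settled = {}
    while frontier:
        cost, node, path = heapq.heappop(frontier)
        if node == goal:
            return path, cost
        if node in settled and settled[node] <= cost:
            continue  # already reached this node at least as cheaply
        settled[node] = cost
        for neighbor, distance, helpers in graph.get(node, []):
            step = distance + helper_weight * (1.0 - helpers)
            heapq.heappush(frontier, (cost + step, neighbor, path + [neighbor]))
    return None, float("inf")


# Hypothetical office map: the back hall is shorter but usually empty,
# while the lobby is longer but almost always has someone nearby.
office = {
    "lab": [("back_hall", 10.0, 0.0), ("lobby", 12.0, 0.9)],
    "back_hall": [("elevator", 10.0, 0.0)],
    "lobby": [("elevator", 12.0, 0.9)],
}

shortest_path, _ = plan_route(office, "lab", "elevator")
helper_path, _ = plan_route(office, "lab", "elevator", helper_weight=5.0)
print(shortest_path)
print(helper_path)
```

With `helper_weight` left at zero the planner simply minimizes distance and takes the back hall. Raising it makes the longer lobby route cheaper overall, because the robot is more likely to find someone there to press the elevator button.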

Transparency

Also important for such robots is transparency, or a means of revealing the robot's internal state, she added. "A person can only infer by looking at the robot what it's doing," Veloso explained.

Signals such as lights and the ability to articulate what they're doing could help give humans a better understanding, she said, but it remains "a very challenging problem."

In general, participants at the conference dismissed many of the most common fears about AI as vastly overblown, particularly given all the research and development that still has to happen.

"We get to invent this future," said Arizona State professor and IJCAI 2016 program chair Subbarao Kambhampati during a press conference on Wednesday. "Why invent a dystopian one?"